The OpenClaw Fiasco and the Demand for AI Agents + Get Started with Claude Code

Agents can book travel, send email, run commands, and run your life. That power needs guardrails. Here’s the AI literacy stack, and why agent management is the next big market.

Welcome to Excellent AI Prompts | Issue #251

If there’s one thing to take from this week’s Clawdbot → Moltbot → OpenClaw saga, it’s this: the demand for personal autonomous agents is massive. And right behind it, equally massive, is the demand for AI agent management.

The interesting part: OpenClaw was built primarily with Claude Code.

I started using Anthropic’s Claude the week after its release and signed up for Pro the day it launched in September 2023. So I say this with more than two years of daily experience: every release has been a considered, clear improvement over the one before.

But Claude Code and Cowork paired with Opus 4.5? It’s so good that it makes me feel like we’re in the messy middle of an Isaac Asimov story.

Opus 4.5 — Why This One Is Different

Opus 4.5 dropped on November 24, 2025, within a week of Google’s Gemini 3 Pro and just weeks before OpenAI’s GPT-5.2. Every major lab shipped its best model within 30 days of the others.

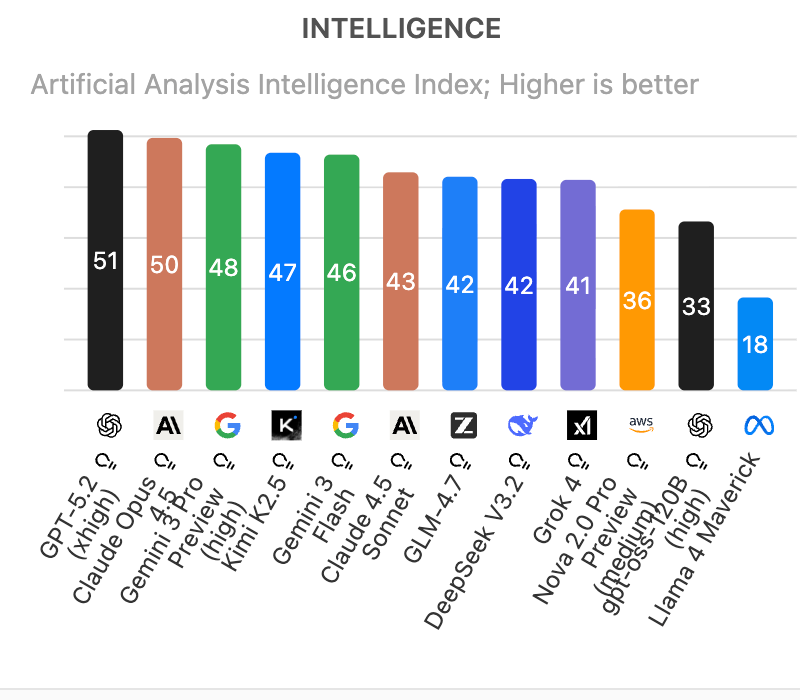

So naturally, everyone wants to know: who won?

Here’s what the scoreboard says. On the Artificial Analysis Intelligence Index, Opus 4.5 ranks as the second most intelligent model in the world, tying OpenAI’s GPT-5.2 and trailing only Google’s Gemini 3 Pro. On SWE-bench Verified, the benchmark that tests whether a model can actually solve real software engineering problems, Opus 4.5 scored 80.9%, making it the first model ever to cross 80%. And on Anthropic’s own internal engineering exam, the one they give to candidates they’re considering hiring, Opus 4.5 scored higher than any human candidate who has ever taken it.

Those are impressive numbers. But you don’t need to understand benchmarks to understand what’s happening here. What matters is what people who build with these models every day are saying.

Nathan Lambert, a senior research scientist at the Allen Institute for AI and author of the Interconnects newsletter, described working with Opus 4.5 this way: it feels like the commodification of building. You type, and outputs are constructed directly. McKay Wrigley, founder of Takeoff AI, said flatly: Opus 4.5 is a winner. And Anthropic will keep winning. He predicted they could pass OpenAI in valuation by early 2027. Based on all the data I’m seeing, he’s probably correct.

Enterprise customers are seeing it too. Rakuten, one of the world’s largest e-commerce platforms, found that agents built on Opus 4.5 could improve their own performance autonomously, hitting peak quality in four rounds of iteration. Other models couldn’t get there in ten.

And the price collapsed. Opus 4.5 costs one-third as much as the previous version. The model that used to be reserved for special occasions is now priced for everyday work.

But the intelligence is just the hook. While everyone was staring at the benchmarks, Anthropic was assembling a much bigger system behind the scenes.

What Anthropic Built While Everyone Was Watching the Benchmarks

Over the past year, while Twitter was arguing about Elo ratings, Anthropic was quietly solving the problem OpenClaw would run straight into: how to let an agent act without handing it the keys to everything. They didn’t just ship a smarter chatbot; they shipped a nervous system.

They released three things that act as the “prefrontal cortex” for AI:

The Nervous System (MCP): The Model Context Protocol is a universal connection standard that lets Claude plug into your tools and data securely. Instead of handing an agent your raw login credentials (as OpenClaw demands), MCP acts as a secure handshake. It’s the difference between handing a valet the valet key and handing over the title to your car. Anthropic donated MCP to the Linux Foundation in December 2025.
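To make the handshake idea concrete, here is a simplified sketch of the message shape. MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with a `tools/call` request that names the tool and its arguments. The `send_email` tool, the `FakeEmailServer` class, and the token handling are hypothetical and far simpler than a real MCP server; the point is only that the credential lives with the server and never appears in what the agent sends.

```python
import itertools
import json

# Simplified illustration of MCP-style messages; not the official MCP SDK.
_request_ids = itertools.count(1)


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request shaped like an MCP tools/call message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


class FakeEmailServer:
    """Hypothetical tool server: it holds the credential; the agent never sees it."""

    def __init__(self, api_token: str):
        self._api_token = api_token  # stays on the server side
        self.sent = []  # record of what the tool actually did, for auditing

    def handle(self, raw_request: str) -> dict:
        msg = json.loads(raw_request)
        if msg.get("method") != "tools/call":
            return self._error(msg, -32601, "Method not found")
        params = msg.get("params", {})
        if params.get("name") != "send_email":
            return self._error(msg, -32602, f"Unknown tool: {params.get('name')}")
        args = params.get("arguments", {})
        # A real server would call the email provider here, using self._api_token.
        self.sent.append((args["to"], args["subject"]))
        return {
            "jsonrpc": "2.0",
            "id": msg["id"],
            "result": {"content": [{"type": "text", "text": f"Sent to {args['to']}"}]},
        }

    @staticmethod
    def _error(msg: dict, code: int, message: str) -> dict:
        return {"jsonrpc": "2.0", "id": msg.get("id"),
                "error": {"code": code, "message": message}}
```

Only the tool name and arguments cross the wire. The server, not the agent, decides what `send_email` is allowed to do, and it refuses tools it does not expose.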

The Corporate Memory (Skills): A system that teaches Claude how you work. Not through endless prompting, but through structured “instruction sets” that live in the background. It turns “generic smarts” into “specialized competence.”
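Concretely, Anthropic’s Agent Skills are folders built around a `SKILL.md` file whose frontmatter carries a `name` and a `description`; the agent sees that short summary up front and reads the full instructions only when a task calls for them. The sketch below mimics that split in plain Python. The `weekly-report` skill, the helper names, and the simplified `key: value` parsing (real frontmatter is YAML) are illustrative assumptions, not Anthropic’s loader.

```python
def parse_skill_md(text: str) -> tuple[dict, str]:
    """Split a SKILL.md file into (frontmatter fields, instruction body).

    Handles only simple `key: value` lines; real frontmatter is YAML.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a '---' frontmatter block")
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("Unterminated frontmatter block")
    meta = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return meta, body


def skill_index(skills: list[str]) -> str:
    """What gets loaded up front: one line of name and description per skill."""
    entries = []
    for text in skills:
        meta, _body = parse_skill_md(text)  # the body stays unread until needed
        entries.append(f"- {meta['name']}: {meta['description']}")
    return "\n".join(entries)


# Hypothetical example skill.
EXAMPLE = """---
name: weekly-report
description: Formats the Friday status report in our house style.
---
# Weekly report
1. Lead with wins, then blockers.
2. Keep it under 200 words.
"""
```

Keeping only the summary in view is what lets many skills sit "in the background" without crowding every conversation.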

The License to Act (Agent SDK): This is the big one. The SDK (software development kit) gives Claude the authority to execute tasks, but, and this is critical, inside a cage. It supports sandboxed execution, meaning that if the agent tries to run a malicious command, the sandbox will most likely stop it before it reaches your hard drive or the open internet.
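The idea of a cage is easier to see in code. Below is a toy policy gate, not the Agent SDK’s actual API: every command the agent proposes gets an allow, ask, or deny verdict before anything runs. The workspace path and the command lists are hypothetical, and a real sandbox enforces limits at the operating-system level rather than by inspecting strings, so treat this as an illustration of the decision logic only.

```python
import posixpath
import shlex

WORKSPACE = "/home/me/agent-workspace"  # hypothetical sandbox root
READ_ONLY = {"ls", "cat", "grep", "head"}  # safe enough to run without asking
NEEDS_APPROVAL = {"rm", "mv", "curl", "git"}  # pause and ask a human first
DENIED = {"sudo", "ssh", "dd", "mkfs"}  # never run


def inside_workspace(path: str) -> bool:
    """True if the path normalizes (lexically) to somewhere under WORKSPACE."""
    full = posixpath.normpath(posixpath.join(WORKSPACE, path))
    return full == WORKSPACE or full.startswith(WORKSPACE + "/")


def decide(command: str) -> str:
    """Return 'allow', 'ask', or 'deny' for a proposed shell command."""
    if any(ch in command for ch in ";|&`$<>"):
        return "deny"  # no chaining, pipes, substitution, or redirects in this sketch
    try:
        argv = shlex.split(command)
    except ValueError:
        return "deny"  # unparseable input is treated as hostile
    if not argv:
        return "deny"
    program, args = argv[0], argv[1:]
    if program in DENIED:
        return "deny"
    # Every non-flag argument is treated as a path and must stay in the workspace.
    if not all(inside_workspace(a) for a in args if not a.startswith("-")):
        return "deny"
    if program in READ_ONLY:
        return "allow"
    if program in NEEDS_APPROVAL:
        return "ask"
    return "deny"  # default-deny anything not explicitly listed
```

The "ask" verdict is where human-in-the-loop lives: the agent waits until you approve. The default-deny fallthrough matters just as much, because anything nobody thought about is refused rather than run.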

Put them together and you don’t have a chatbot. You have an AI employee. One that comes with HR policies, security clearance, and a job description already installed.

But today, I want to review what happens when someone builds an agent without that infrastructure or an ounce of AI literacy.

OpenClaw: The Most Exciting, Terrifying Thing Happening in AI Right Now

I need you to hold two truths in your head at the same time.

Truth one: OpenClaw is extraordinary.

It is the closest thing we have to the personal AI agent people have been dreaming about since the first iPhone. It runs on your own computer, connects to your WhatsApp, your email, your calendar, your Slack. It doesn’t just answer questions. It books your dinner reservation. It responds to your emails. It manages your schedule. It screens your calls. One user has it running his morning briefings, managing invoices, scheduling meetings, and texting his wife when their kids have homework due.

One hundred thousand GitHub stars in under a month.

Coverage in TechCrunch, WIRED, Forbes, Ars Technica.

Truth two: OpenClaw is a security nightmare.

And I don’t mean that figuratively. It can run shell commands, read and write files, and execute scripts on your machine. To do its job, it needs root access. It can reach your accounts, your credentials, your messages, your files. Everything.

Security researchers found hundreds of instances exposed to the open web. Of the ones examined manually, eight had no authentication at all, giving full access to run commands and view configuration data. API keys were leaked in plaintext. During the chaotic rebrand from Clawdbot to Moltbot, someone hijacked the old GitHub username and started creating fake cryptocurrency projects under the creator’s name. One fake token hit a $16 million market cap before crashing.
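Two of those failures, no authentication and API keys in plaintext, have standard fixes. The sketch below shows them with Python’s standard library: listen on loopback unless you deliberately expose the service, require a bearer token compared in constant time, and read secrets from the environment. The `AGENT_TOKEN` variable and the function names are hypothetical and not taken from OpenClaw.

```python
import hmac
import os
import secrets


def new_token() -> str:
    """Generate a long random token once and store it somewhere safe."""
    return secrets.token_urlsafe(32)


def load_token(env_var: str = "AGENT_TOKEN") -> str:
    """Read the token from the environment rather than a plaintext config file."""
    token = os.environ.get(env_var)
    if not token:
        raise RuntimeError(f"{env_var} is not set; refusing to start without authentication")
    return token


def bind_address(expose_to_network: bool = False) -> str:
    """Listen on loopback by default so only this machine can connect."""
    return "0.0.0.0" if expose_to_network else "127.0.0.1"


def is_authorized(headers: dict, expected_token: str) -> bool:
    """Accept only 'Authorization: Bearer <token>', compared in constant time."""
    scheme, _, supplied = headers.get("Authorization", "").partition(" ")
    if scheme != "Bearer" or not supplied:
        return False
    return hmac.compare_digest(supplied.encode(), expected_token.encode())
```

Note the failure mode: with no token configured, `load_token` refuses to start instead of quietly running wide open.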

A security researcher uploaded a poisoned Skill to ClawdHub, the agent’s skills library, artificially inflated the download count to over 4,000, and watched developers from seven countries download it. It was a proof of concept. He could have executed commands on every single one of those machines.
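The defense here is old and boring: decide what you trust by reviewing it, record a cryptographic hash of exactly what you reviewed, and refuse anything that does not match. Download counts and stars never enter the decision. This is a generic pattern, not a description of how ClawdHub works; the `pinned` lockfile and the function names are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Fingerprint the exact bytes of a downloaded skill or plugin."""
    return hashlib.sha256(data).hexdigest()


def check_skill(name: str, archive: bytes, pinned: dict[str, str]) -> str:
    """Return 'ok', 'unreviewed', or 'modified'.

    `pinned` is a hypothetical lockfile mapping each skill you have read
    to the SHA-256 of the version you read. Stars and download counts
    are deliberately not inputs.
    """
    expected = pinned.get(name)
    if expected is None:
        return "unreviewed"  # nobody you trust has read this code yet
    if sha256_hex(archive) != expected:
        return "modified"  # the bytes changed after your review
    return "ok"
```

A poisoned upload with 4,000 inflated downloads still comes back "unreviewed" until someone actually reads it.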

Cisco’s security team published their assessment the same week. Their headline: Personal AI agents like OpenClaw are a security nightmare.

Green Light for You

I don’t want you to look at OpenClaw and see a failure. I want you to see a massive, flashing green light.

This was a stress test for how people will deal with an agentic world.

We tried to run a supersonic jet on a gravel driveway. The lack of permission controls, sandboxing, and supply chain verification meant there was nothing standing between an “amazing capability” and “root access to ruin your digital life.”

It proved that the distance between a “weekend hobby project” and a global phenomenon has officially collapsed to zero.

Peter Steinberger, the creator of OpenClaw, built a tool in three months that moved markets. That is the leverage available to anyone willing to look under the hood.

This is why I will keep beating this drum until my hands hurt: AI literacy is not optional, no matter what your views on AI are.

Think of it this way. You don’t need to know how to rebuild a car engine. But if someone hands you the keys to a vehicle that drives itself, you’d better understand what the brake pedal does, where the car is taking you, and what happens if you fall asleep at the wheel.

OpenClaw is that car. It is incredibly capable, genuinely useful, and absolutely not something you should be operating without understanding the basics.

Here’s my short list. The bare minimum AI literacy stack for anyone deploying or even considering an autonomous agent:

Tool knowledge: What is this tool, and what is it meant to help me do?

Permissions: What are you giving this tool access to? Can it read your files? Send messages on your behalf? Execute code on your machine? If you don’t know, you aren’t ready.

Sandboxing: Is the agent contained? If it makes a mistake, how far can the damage spread? If the answer is “everywhere,” you have a problem.

Authentication: Is the agent’s interface exposed to the internet? Can anyone connect to it, or just you? If you don’t know, assume the worst.

Human-in-the-loop: At what points does the agent stop and ask you before acting? If the answer is “never,” you are in for a wild and dirty ride, my friend.

Supply chain: Where did the Skills or plugins come from? Did you build them? Or did you download them from a public library because they had a high star count? (We just saw what happens when you trust star counts.)
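To keep yourself honest, the same six questions can be written down as a pre-flight check that must come back empty before an agent goes live. This is a hypothetical sketch: the `AgentSetup` fields, the `send:`/`exec:`/`delete:` permission labels, and the rule that those actions require approval are illustrative choices, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSetup:
    """Answers to the literacy-stack questions for one agent (hypothetical fields)."""
    purpose: str = ""
    permissions: list[str] = field(default_factory=list)  # e.g. "read:email", "send:email"
    sandboxed: bool = False
    bind_address: str = "0.0.0.0"
    requires_auth: bool = False
    approval_required_for: list[str] = field(default_factory=list)
    unreviewed_plugins: list[str] = field(default_factory=list)


def preflight(setup: AgentSetup) -> list[str]:
    """Return the problems to fix before turning the agent on; empty means ready."""
    problems = []
    if not setup.purpose:
        problems.append("Tool knowledge: write down what this agent is for.")
    if not setup.permissions:
        # An empty list here means "I don't know", not "nothing".
        problems.append("Permissions: list exactly what it can access.")
    if not setup.sandboxed:
        problems.append("Sandboxing: mistakes can spread beyond the agent.")
    if setup.bind_address != "127.0.0.1" and not setup.requires_auth:
        problems.append("Authentication: reachable from the network with no login.")
    risky = [p for p in setup.permissions if p.startswith(("send:", "exec:", "delete:"))]
    unguarded = [p for p in risky if p not in setup.approval_required_for]
    if unguarded:
        problems.append(f"Human-in-the-loop: no approval step for {', '.join(unguarded)}.")
    if setup.unreviewed_plugins:
        problems.append(f"Supply chain: review {', '.join(setup.unreviewed_plugins)} first.")
    return problems
```

An all-defaults `AgentSetup()` fails four checks at once, which is roughly what an unconfigured agent exposed to the web looks like.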

The Weekend Builder’s Challenge

You cannot manage these agents if you are afraid to touch them. I want you to spend one hour this weekend building your own “Agent.” Not to run your business, but to understand the physics of the tool.

We are going to use Claude Code, Anthropic’s command-line tool.